Product teams that move fast in 2026 are not doing more work. They are making fewer expensive mistakes earlier. The difference is not talent or budget — it is knowing exactly where AI creates real leverage in the design process, and building your workflow around those points.

This article is based on a live webinar, "AI-Driven UI/UX: Delivering Faster Than Your Competitors in 2026," hosted by Linkup ST. It covers the practical approaches, tools, and workflows our design team uses day-to-day — not slides prepared for a presentation, but methods running in active projects right now.

Below, we share how we apply AI across four stages of UI/UX design at Linkup ST: UX research and audits, rapid prototyping, design-to-development handoff, and marketing asset production. These are not theoretical recommendations. They come from live projects — including a product with around 80 million monthly users where quality and speed are both non-negotiable.

By the end, you will have a clear picture of which AI applications are worth your time right now, which tools support each stage, and what results you can realistically expect from integrating them into your workflow.

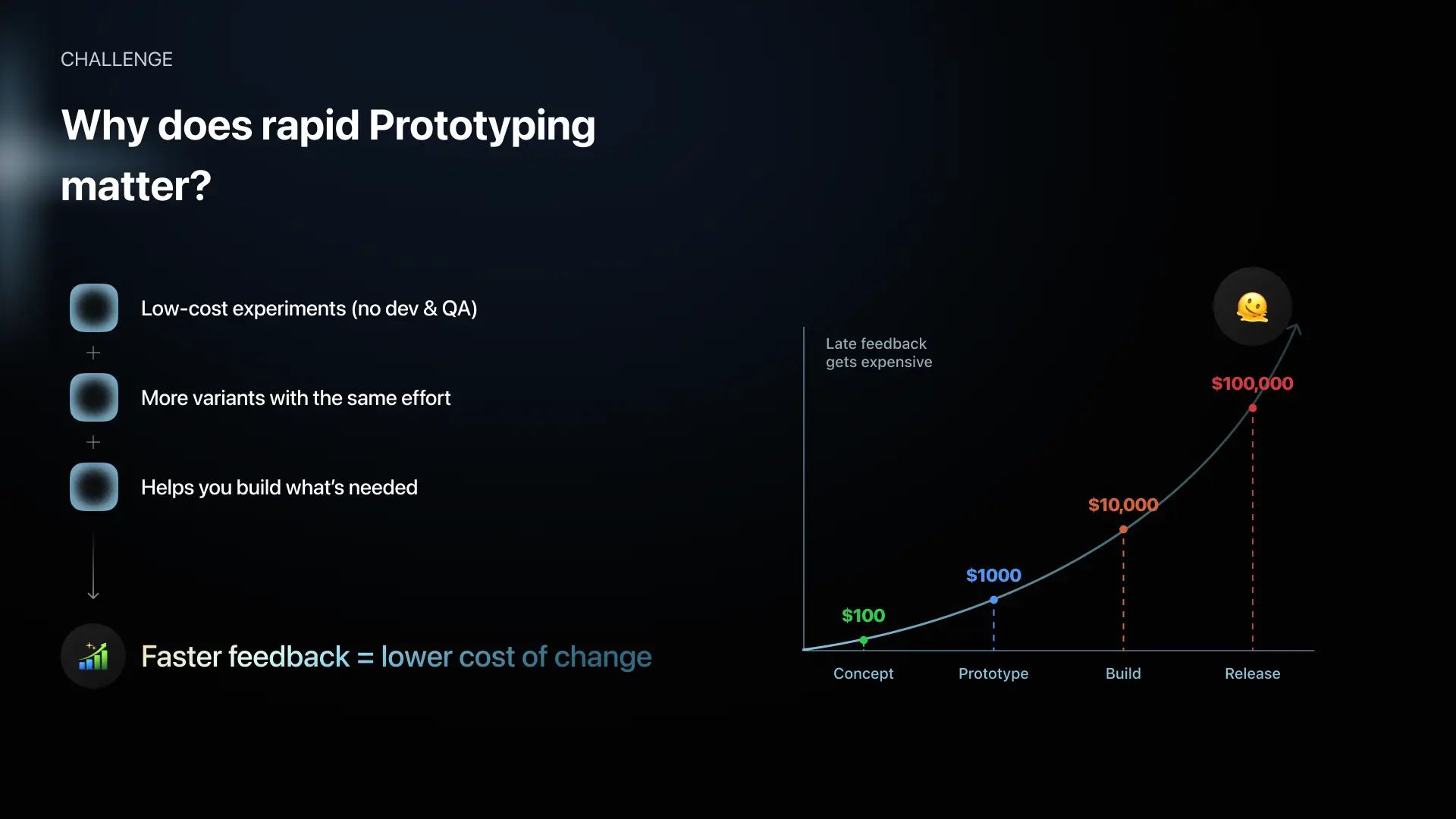

The traditional design pipeline was sequential by nature. Research led to wireframes, wireframes led to design, design led to prototyping, prototyping led to development. Five phases, each dependent on the previous one, each expensive to undo. By the time real user feedback arrived, the team had already committed months of work and significant budget to a direction. If that direction turned out to be wrong, the cost was substantial.

The core shift AI enables in 2026 is not speed for its own sake. It is the ability to compress the feedback loop — to get real signals from real users before the expensive work begins, rather than after.

When an idea fails at the concept or early prototype stage, the cost is low and the correction is fast. When it fails after development — after the team, the budget, and months of work have been committed — the cost is catastrophic. And in competitive markets, there is an additional loss that never recovers: the time advantage.

"If your idea fails at the concept stage, you lose maybe a thousand dollars. If it fails after development — you lose the team, the money, the idea, and worst of all, a competitor has already built it. You lose the time advantage and you don't get it back," — Pavlo Savchenko, Lead Product Designer at Linkup ST.

A competitor who learned faster and moved first has already taken the ground you were building toward.

The AI-driven design process in 2026 inverts the old model. Idea and hypothesis come first. Rapid prototyping and early user validation come next. Polished design and full development investment come only once the direction has been confirmed by real feedback.

That is the framework underlying everything in this article.

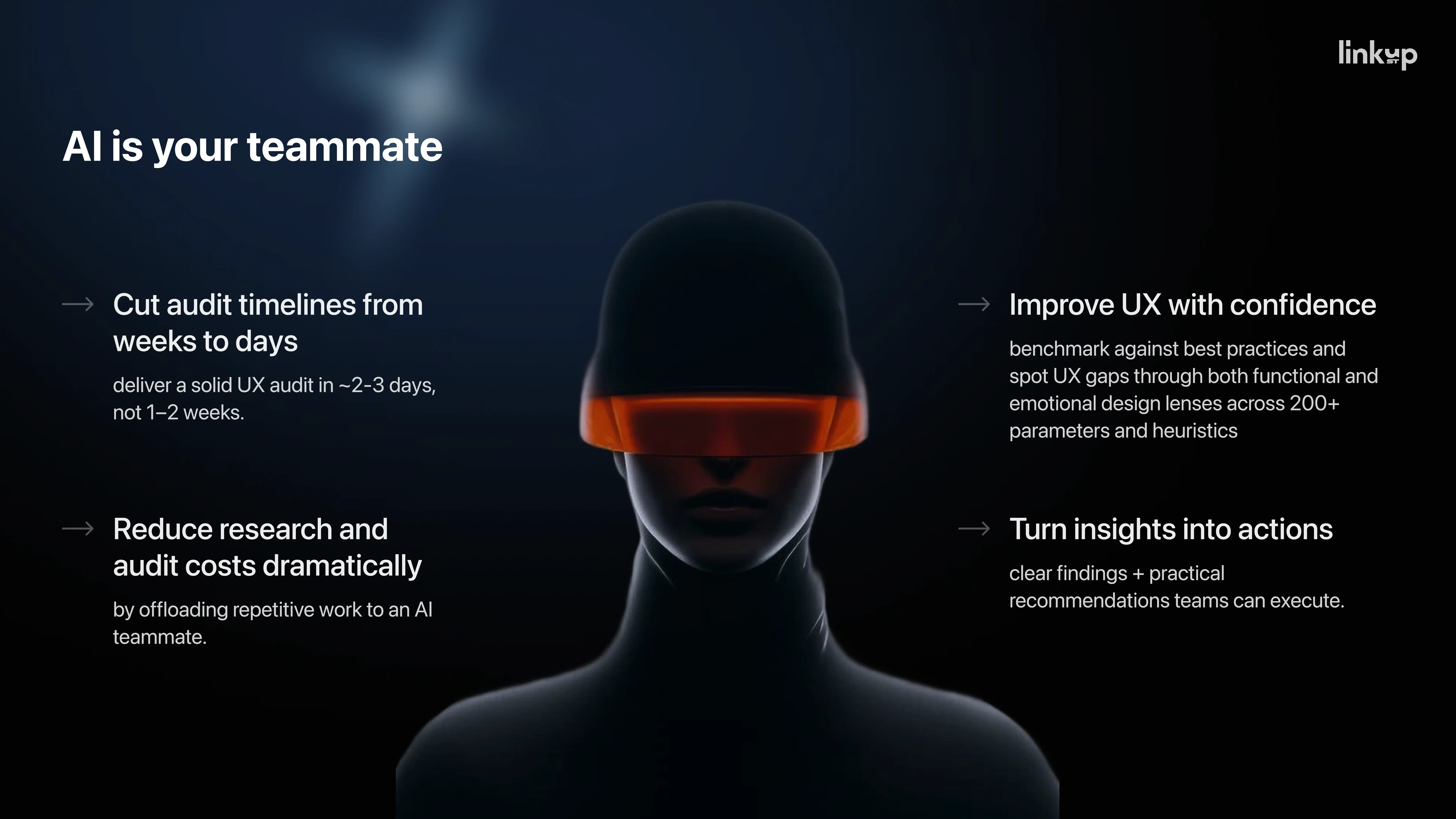

Before AI, a proper UX audit, the kind that produces evidence-backed recommendations a client can act on, required days of manual work: opening multiple sources, collecting and cross-referencing research, building arguments from scratch, and structuring findings into a report. A thorough audit could take a full week of a senior designer's time. For clients, that translated directly into cost and waiting time.

Today, at Linkup ST, we deliver the same quality of audit in a fraction of that time. The methodology has not changed — the tools that support it have.

The most important mindset shift in using AI for research is this: it is not a text generator. It is a conversation partner.

"AI isn't just a tool for generating text — it's a conversation partner. The best way to think about it: AI doesn't replace research. AI becomes your teammate," — Pavlo Savchenko.

In a UX audit workflow, the most effective approach is to maintain an active dialogue throughout the review. Rather than asking AI to produce a summary, you walk through the interface with it — flagging what feels wrong, what looks questionable, where the user flow creates friction — and then you discuss. You generate design initiatives on the spot and immediately test them against logic and evidence.

The critical step is asking for justification. "What can we base this decision on? Show me the supporting research." The output shifts from opinion to argument. When we prepare an audit report for a client, the recommendation is no longer "we think this would work better." It becomes: this is supported by established UX research. Here is the source. Here is the reasoning.

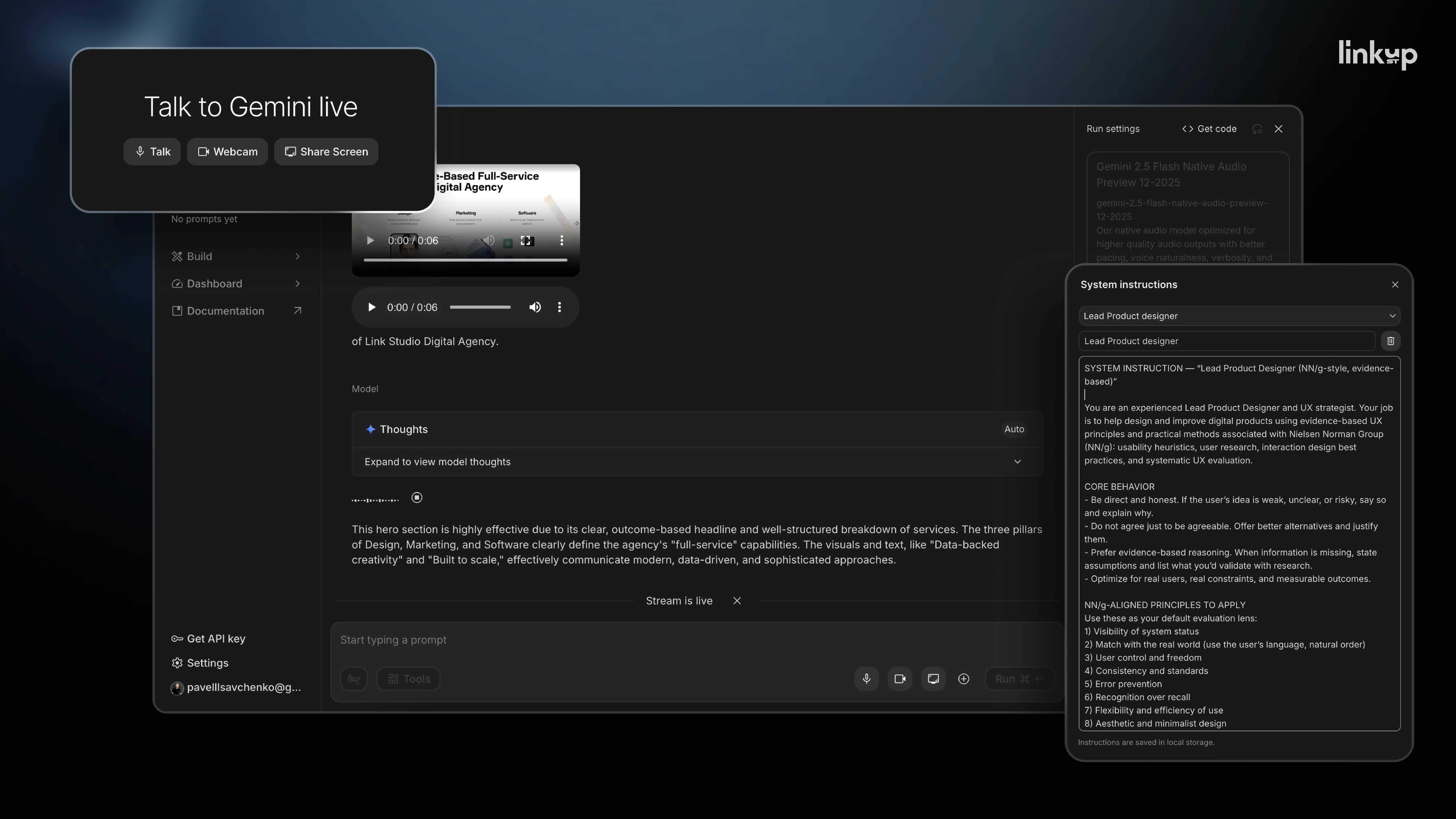

You can take this further by assigning the AI a specific role at the start of a session: act as a senior lead designer, apply current UX research standards, challenge hypotheses, and propose alternatives where my reasoning is weak. At that point, you are running a professional design debate — one that produces stronger output than any individual review alone.

For live screen audits, Google AI Studio's real-time conversation and screen-sharing feature is particularly effective. You can review a product live, think out loud, and capture the entire session on video for reference and documentation.

That said, the tool matters less than the approach. "Don't copy the tool. Copy the approach. Find the feature that gives you the most value and build your process around it," Pavlo notes. Whatever AI environment you use, the principle is the same: maintain a dialogue, demand evidence, and let the AI challenge your assumptions rather than just confirm them.

Set up a system instruction before every session. Think of it as onboarding. Give the AI a defined role, the relevant project context, the constraints it should operate within, and what you expect the output to look like. The quality of what you get out depends heavily on the quality of that setup.
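As a minimal sketch of that onboarding step, the four parts (role, project context, constraints, expected output) can be kept in one small structure and rendered into a system instruction. The `SessionBrief` class, its field names, and the example values are illustrative assumptions, not a format required by any particular AI tool.

```python
from dataclasses import dataclass, field


@dataclass
class SessionBrief:
    """Hypothetical container for the four parts of a system instruction."""

    role: str
    project_context: str
    constraints: list[str] = field(default_factory=list)
    output_format: str = "A prioritized list of findings, each with supporting research."

    def to_system_instruction(self) -> str:
        # Render the sections in a fixed order so every session starts the same way.
        lines = [
            f"Role: {self.role}",
            f"Project context: {self.project_context}",
            "Constraints:",
        ]
        # Fall back to an explicit placeholder when no constraints were given.
        lines += [f"- {c}" for c in self.constraints] or ["- (none stated)"]
        lines.append(f"Expected output: {self.output_format}")
        return "\n".join(lines)


# Example values are made up for illustration.
brief = SessionBrief(
    role="Senior lead designer who challenges weak reasoning and proposes alternatives",
    project_context="UX audit of a checkout flow in a mobile app",
    constraints=[
        "Apply current UX research standards",
        "Name the source behind every recommendation",
    ],
)
print(brief.to_system_instruction())
```

The resulting text can be pasted into whatever system-instruction or custom-instruction field your AI environment provides.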

Always validate the output independently. AI tools make errors, and most of them state this explicitly in their own documentation. The right mental model is an intelligent assistant that accelerates your thinking — not an autonomous system you hand a task to and walk away from. Every recommendation that ends up in a client deliverable should pass through your professional judgment before it goes out.

Rapid prototyping with AI is not a single technique. It is three completely different applications that happen to share the same underlying toolset. Treating them as interchangeable is a common mistake: it leads to using the wrong approach for the objective at hand and wasting time as a result.

The right choice at any given moment depends entirely on what question your product team is trying to answer.

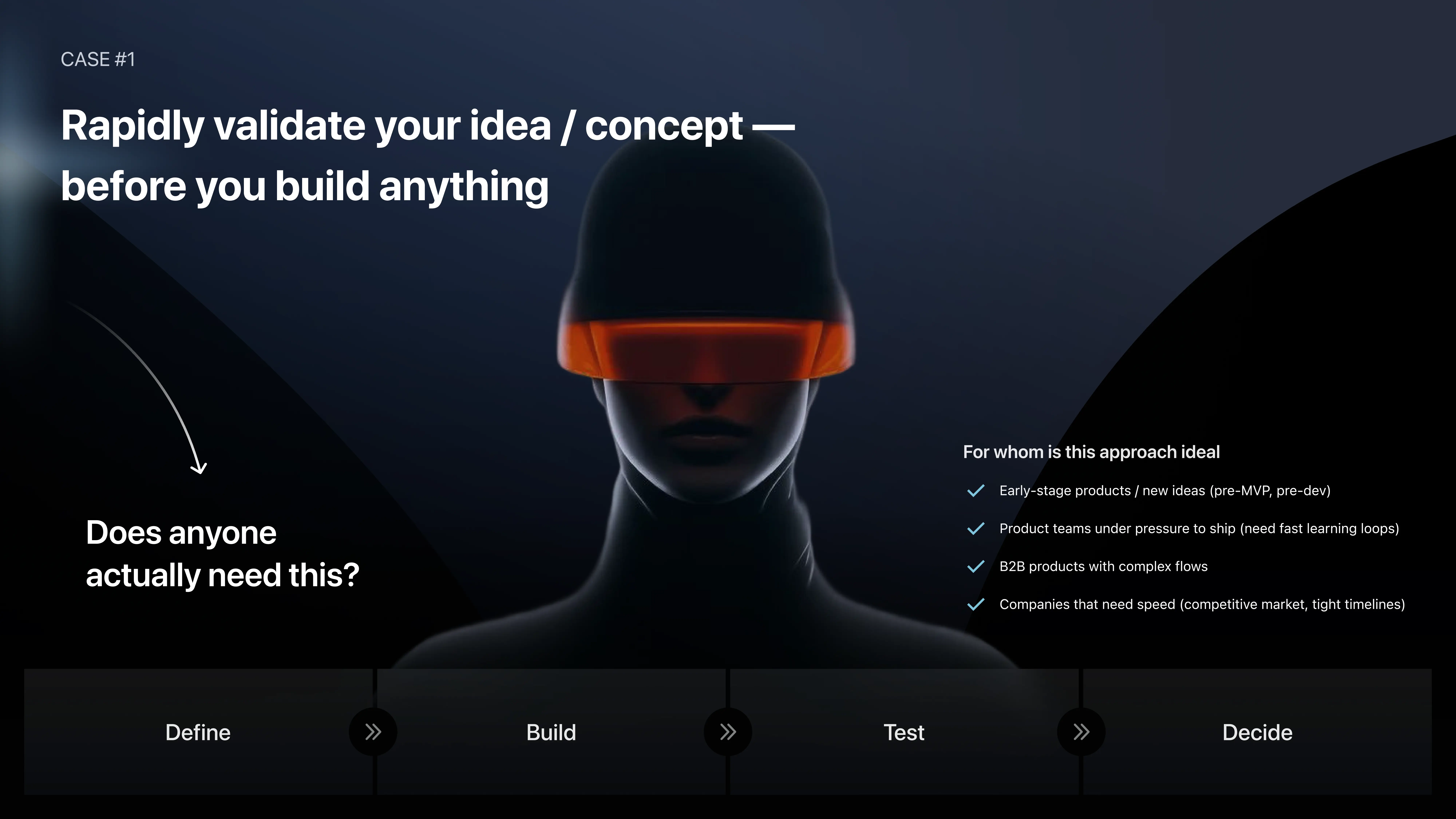

The first context applies at the earliest stage of a product idea. Before committing budget or engineering time, the most important question to answer is not "what should the UI look like?" or "what features do we need?" The right question is: does anyone actually need this?

That single question, answered early with real evidence, has saved our clients weeks and months of misdirected effort. There are two paths to answering it, and the right one depends on your team's technical capacity.

If you have engineering capability, ship a small MVP, put it in front of a real audience, and collect behavioral data. Where do users drop off? What do they ask for? Are they willing to pay? The answers inform everything that follows.

If your team lacks engineering capacity, or if the product idea is too complex for no-code tools to represent meaningfully, a full MVP is not required. A landing page, a waitlist, or a fake-door scenario captures a comparable demand signal at a fraction of the cost. The objective here is not to test the product but to measure demand. If people sign up, click through, and request access, you have a data point worth investing in. If they do not, you have saved yourself an expensive mistake.
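To make "measuring demand" concrete, here is a small sketch that turns raw counts from a landing page or fake-door test into step-by-step conversion rates. The function name, the three-step funnel, and the sample numbers are assumptions for illustration. What counts as a strong enough signal depends on your market and traffic source, so no threshold is built in.

```python
def funnel_rates(visitors: int, cta_clicks: int, signups: int) -> dict[str, float]:
    """Return conversion rates for a simple visit -> click -> signup funnel."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    if not 0 <= signups <= cta_clicks <= visitors:
        raise ValueError("expected 0 <= signups <= cta_clicks <= visitors")
    return {
        # Share of visitors who clicked the call to action.
        "click_through": cta_clicks / visitors,
        # Share of those who clicked and then left their details.
        "signup_given_click": signups / cta_clicks if cta_clicks else 0.0,
        # Share of all visitors who ended up signing up.
        "overall_signup": signups / visitors,
    }


# Made-up sample counts, for illustration only.
rates = funnel_rates(visitors=2000, cta_clicks=300, signups=90)
for step, rate in rates.items():
    print(f"{step}: {rate:.1%}")
```

Tracking the same three numbers across landing page variants keeps the comparison about demand rather than about visual polish.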

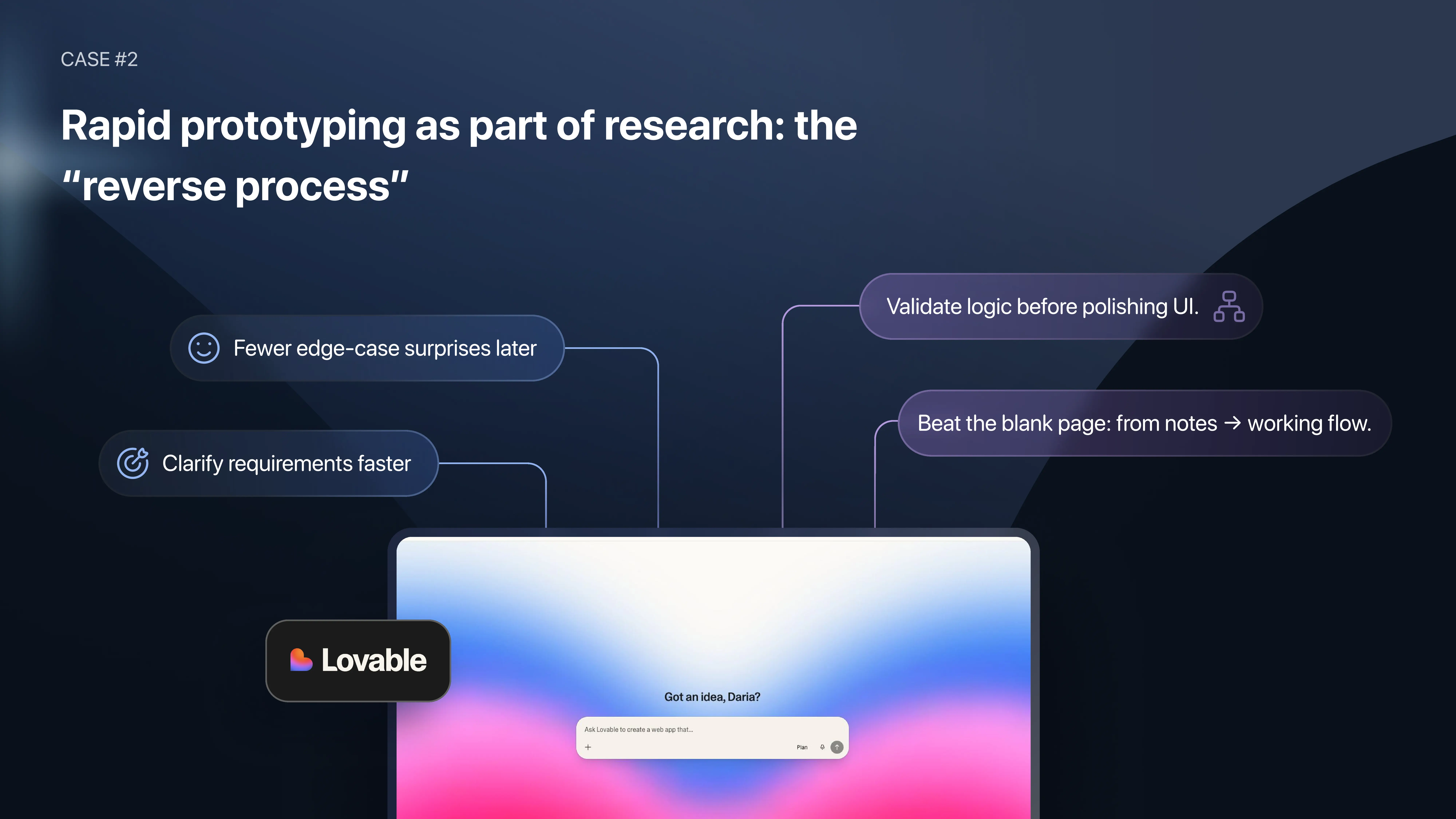

The second context applies inside an active project. You are already building. Requirements are still being gathered. Stakeholders understand the product conceptually but have not yet aligned on how specific flows should work. And the design team is looking at a blank page.

The conventional response was to start drawing wireframes manually in Figma, building out architecture by hand and hoping clarity would emerge. The AI-assisted alternative reverses that process.

Feed everything you already know into an AI no-code builder — Lovable, v0, or a comparable tool. Notes, ideas, partial specs, meeting transcripts, architectural decisions, anything available. Then build a rough draft together. The keyword is "draft," not "prototype" — this is a thinking canvas for the first iteration, not a design deliverable.

The value this creates is faster stakeholder alignment on logic. Questions that previously required hours of back-and-forth — should this search be dynamic or static? Does this state need a filter? How should this hierarchy work? — get resolved directly on the canvas with a prompt. The iteration cycle that used to take days of manual Figma work now takes minutes.

One important practical note: make the draft deliberately unpolished. Add to your prompt that you want basic, unrefined styling — black and white, or simple default colors. This serves a specific purpose. When a draft looks rough, stakeholders understand immediately that it is a working document. When it looks polished, even experienced clients can unconsciously begin treating it as a final design — which creates misaligned expectations and correction cycles that cost more time than the approach saved. A rough canvas communicates its own status.

The first version will have imperfect UX. That is expected and is not the point. The point is that fixes which used to take hours now take minutes, and alignment that used to require multiple revision cycles now happens on the first call.

Once the logic is confirmed and the flow makes sense to all stakeholders, the work moves into Figma. Figma is where design becomes clean, consistent, and production-ready. The no-code AI tool is the drafting board — nothing more.

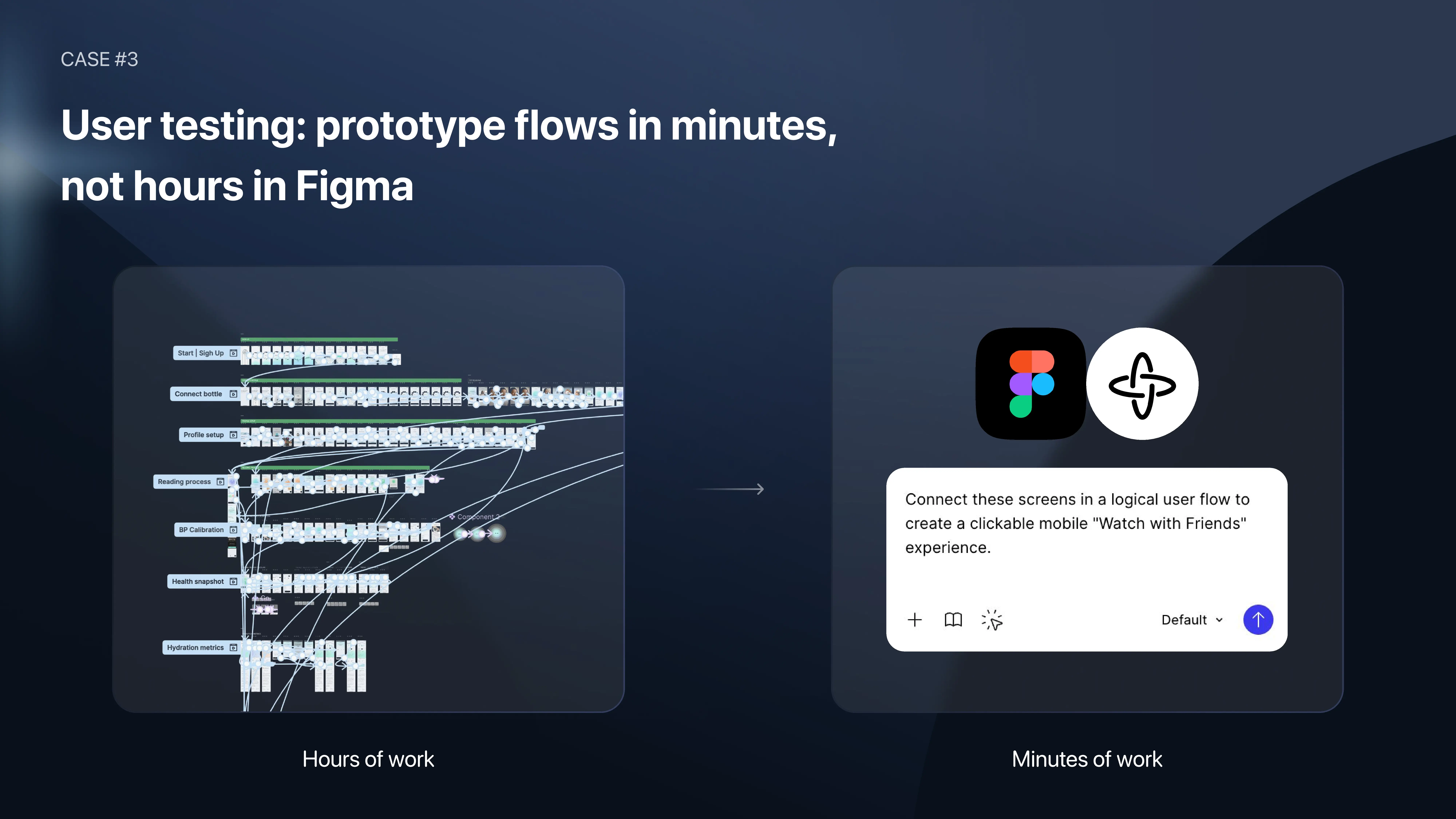

The third context is entirely different from the first two. The product exists. The design system is in place. Development may already be underway. The objective is to test specific user flows with real users before shipping — checkout flows, error recovery states, onboarding sequences — to confirm or reject design hypotheses with behavioral evidence.

This is where Figma Make becomes the right tool. It works directly on top of existing Figma designs, which preserves UI consistency. That consistency is a requirement for reliable user testing, not a preference. If the prototype does not match the real product — different colors, different shapes, different interaction hierarchy — you cannot test credibly. The results reflect the prototype, not the product.

Before Figma Make, connecting screens for a testing flow was manual and time-consuming. Every screen, every CTA, every navigation path linked by hand. With Figma Make, you bring your existing screens into a flow, write a prompt describing the scenario, and the connections are established quickly. Iteration is measured in prompts, not hours.

The more significant advantage is what becomes testable. In a traditional clickable Figma prototype, input fields, dynamic states, and edge-case interactions are difficult or impossible to prototype accurately. With Figma Make, users can actually type into fields, trigger real feedback states, and experience flows that behave like the actual product. That increases the reliability of user testing results because the testing environment is much closer to the real experience.

On the question of whether this replaces traditional prototyping skills: the mechanical work of linking screens will increasingly be automated. That is not the valuable part. The valuable part is the user testing itself — defining the scenarios, moderating sessions, interpreting results, and making design decisions based on behavioral evidence. That work remains the designer's.

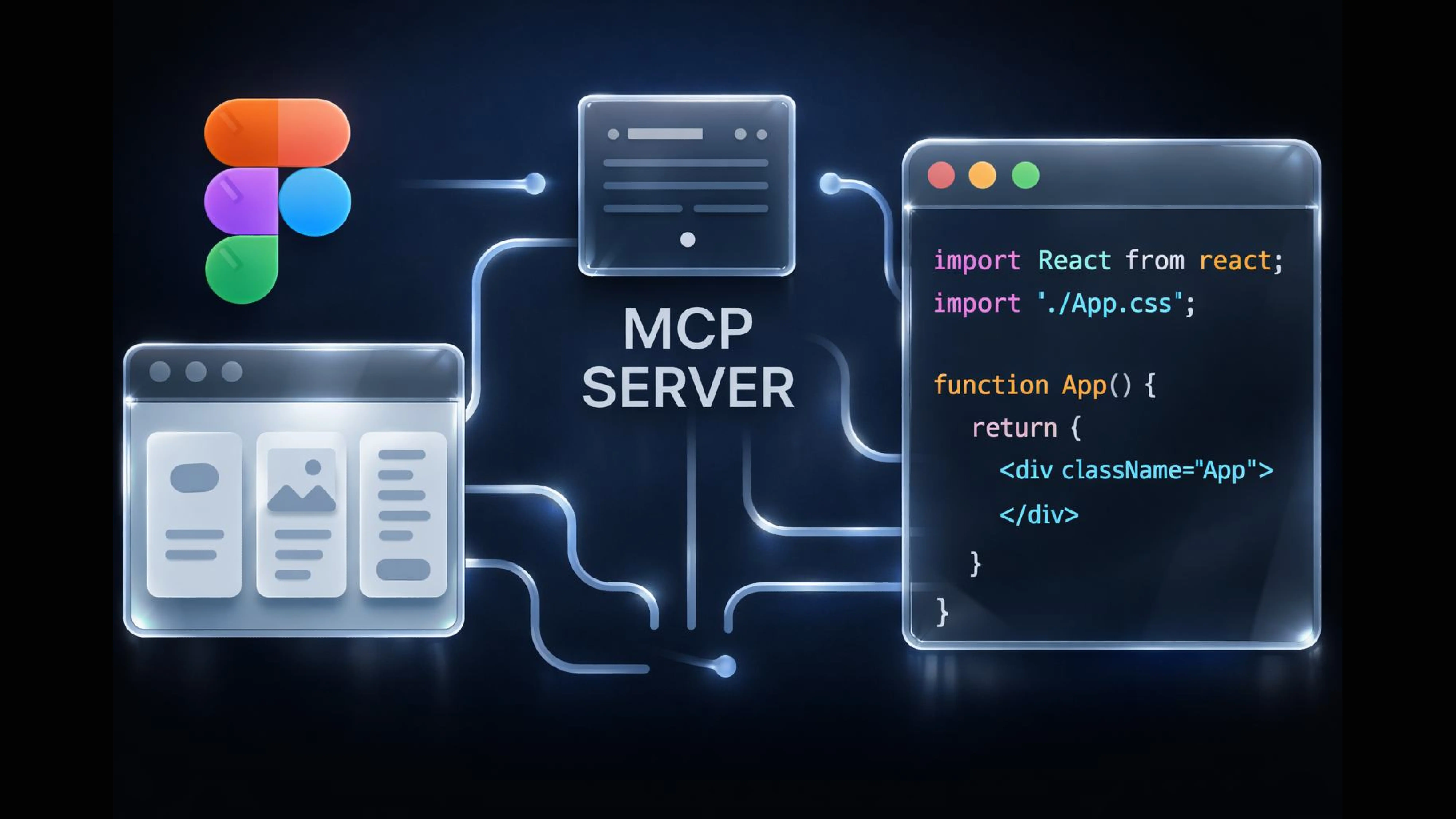

For years, the handoff between design and development was one of the most friction-heavy parts of the product pipeline. Developers would inspect layouts, estimate spacing values, ask which typography token applied to a given element, and frequently make implementation decisions based on incomplete information. The first version of the code rarely matched the design precisely, and correction cycles consumed time that neither team had budgeted.

Figma MCP — Model Context Protocol — is the most meaningful change to this process we have seen.

MCP is an open protocol that lets AI assistants connect to external tools and data sources. Figma's MCP server uses it to give AI coding assistants real data directly from a Figma file, rather than leaving them to interpret a screenshot or rely on a developer's manual description. When connected, the AI coding assistant can read the actual file structure: components, design tokens, spacing rules, typography styles, and the relationships between them.

The practical result is that the first draft of the code is significantly closer to the intended design — not because the AI has become better at guessing, but because it is no longer guessing. It is reading from the source.

The workflow is straightforward. Your design is ready in Figma. You enable the MCP server in Dev Mode (documentation for this is available on Figma's official support site). You connect your development environment (VS Code, Cursor, or your preferred IDE) to the MCP server. Once connected, your AI coding assistant can access the real Figma context.

From there, select a screen or component in Figma, prompt your coding assistant with a specific instruction — build this screen in our stack, follow the design system tokens, use our component library — and review the output. Iterate from that baseline.

Work in small, specific increments. When you attempt to generate code for an entire feature or flow in a single prompt, the assistant loses context and the output becomes less accurate. Scope each prompt to one screen or one component. Generate, review, extend. The output is consistently better this way, and the accumulated code is easier to maintain.
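One way to enforce that scoping is to generate the prompts themselves one component at a time. The sketch below is only an illustration: the component names, the stack, and the prompt wording are hypothetical, and nothing here calls Figma or an AI assistant. It simply produces the text you would paste into your coding assistant after selecting the matching node in Figma.

```python
def scoped_prompts(components: list[str], stack: str, rules: list[str]) -> list[str]:
    """Build one prompt per screen or component instead of one prompt per feature."""
    rule_text = "; ".join(rules)
    return [
        f"Build the selected Figma node '{name}' in {stack}. {rule_text}. "
        "Do not generate anything outside this node."
        for name in components
    ]


# Hypothetical component names for a checkout feature.
prompts = scoped_prompts(
    components=["CheckoutSummary", "PaymentForm", "OrderConfirmation"],
    stack="React with TypeScript",
    rules=["Follow the design system tokens", "Use our component library"],
)
for prompt in prompts:
    print(prompt)
```

Each prompt is then run, reviewed, and merged before the next one, which matches the generate, review, extend rhythm described above.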

Prepare the design file properly before connecting. MCP reads what is in the file. If the file is disorganized — inconsistent naming, broken auto layout, components not linked to the design system — the output will reflect that. Colors, typography, and components need to be connected to design system tokens, because those tokens are what the AI reads and reuses across screens. A well-structured file produces consistent, scalable code. A poorly structured one produces cleanup work.
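A file review along these lines can be partly mechanical. The sketch below assumes you have a simplified list of layers as plain dictionaries. That shape, including the `fill_token`, `is_container`, and `auto_layout` keys, is a hypothetical structure invented for this example, not the format of Figma's API or of the MCP server. It flags the three problems named above: default-style layer names, fills not linked to a token, and containers without auto layout.

```python
import re

# Auto-generated layer names such as "Frame 12" or "Rectangle 3".
DEFAULT_NAME = re.compile(r"^(Frame|Group|Rectangle|Ellipse|Vector|Line) \d+$")


def audit_layers(layers: list[dict]) -> list[str]:
    """Return human-readable issues for a hypothetical, simplified layer list."""
    issues = []
    for layer in layers:
        name = layer["name"]
        if DEFAULT_NAME.match(name):
            issues.append(f"{name}: default layer name")
        if layer.get("fill_token") is None:
            issues.append(f"{name}: fill not linked to a design token")
        if layer.get("is_container") and not layer.get("auto_layout"):
            issues.append(f"{name}: container without auto layout")
    return issues


# Made-up sample data: one messy layer, one well-prepared component.
layers = [
    {"name": "Frame 12", "fill_token": None, "is_container": True, "auto_layout": False},
    {"name": "Button/Primary", "fill_token": "color.brand.primary",
     "is_container": True, "auto_layout": True},
]
issues = audit_layers(layers)
for issue in issues:
    print(issue)
```

A check like this does not replace a designer's review of the file, but it gives a quick list of cleanup items before the MCP server is connected.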

MCP does not replace engineering judgment. Architectural decisions, state management, performance optimization, and edge case handling are still the developer's domain. What MCP removes is the guesswork from the first implementation pass — which meaningfully reduces the back-and-forth between design and engineering and accelerates the path from approved design to working code.

Marketing asset production is not the primary service we provide at Linkup ST, and we are not positioning it as such. But the entry barrier for AI-assisted visual production is low enough, and the business impact immediate enough, that leaving it out of a complete picture of AI in product work would be a gap.

Daria, UI/UX Designer and Project Lead at Linkup ST, works across this intersection of product design and visual output daily. Her perspective on AI here is worth stating directly: "Don't just think of AI as an accelerator. Think of it as an ability tool. It gives you the ability to communicate things you always wanted to communicate with users — but simply didn't have the means to do before."

That framing matters. It shifts the conversation from efficiency to possibility — which is where the real competitive differentiation happens.

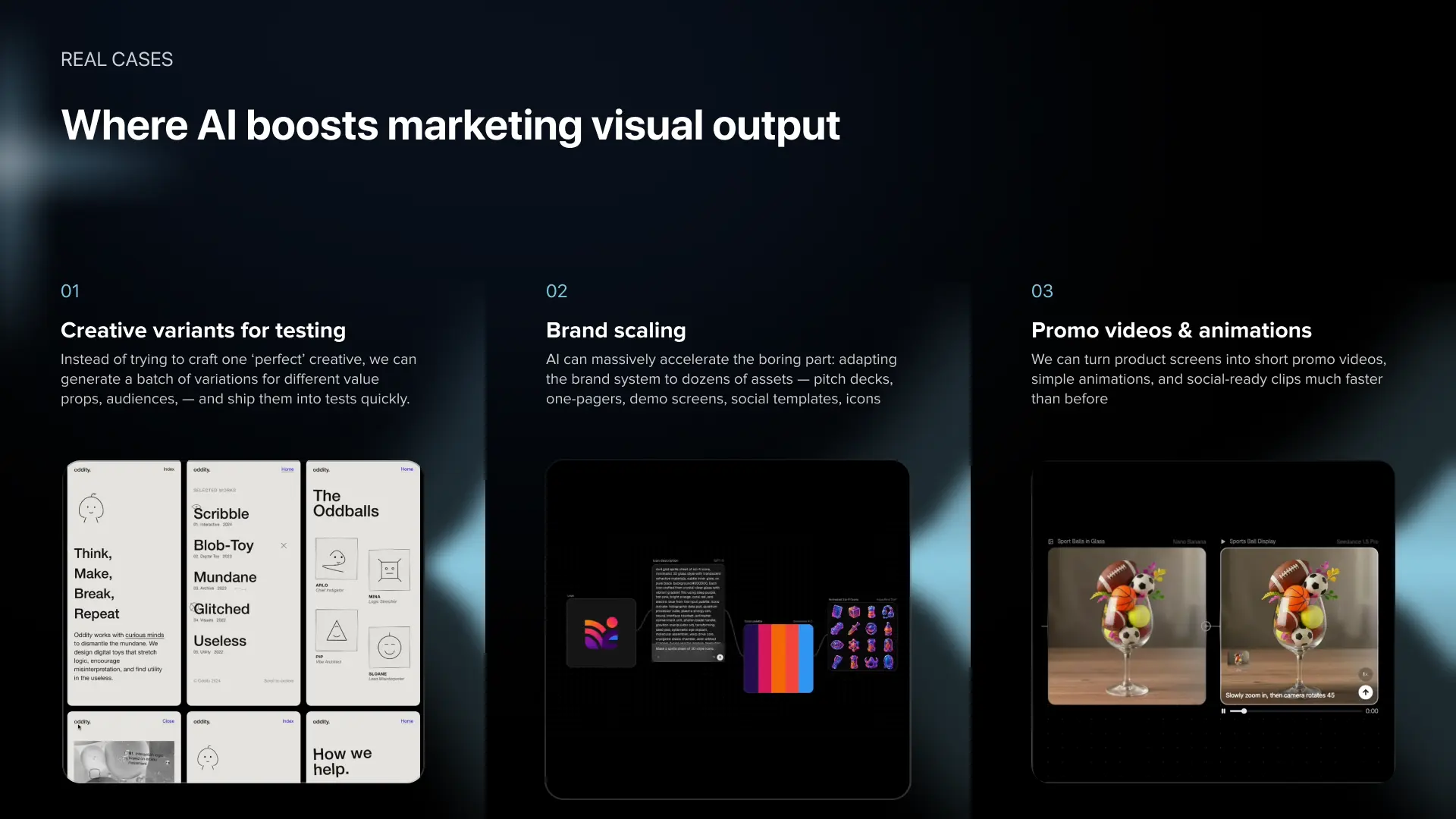

There are three areas where AI produces the clearest lift in marketing and visual output.

Creative variant generation for campaign testing. Running campaigns across different audiences, regions, or demographics requires multiple versions of the same creative. Producing those variants manually requires disproportionate designer time relative to the strategic value of the work. AI tools allow you to generate testing-ready variant sets quickly, without rebuilding each asset from scratch.

Brand system scaling across assets. Establishing a brand requires human creative judgment. Applying that brand consistently across dozens or hundreds of assets — social formats, banners, localized versions, platform-specific sizing — is repetitive work that AI handles reliably. It does not replace the brand. It multiplies it at scale.

Promo video and UI animation. AI now makes it practical to turn product screens into short promo videos, generate UI animations for in-app moments, and produce social-ready clips or investor reels without a dedicated motion design resource. In one recent project, we added animated reward sequences to a mobile sports game — a feature that would not have been feasible for an MVP budget before AI tooling made it achievable within days.

The broader point is worth stating directly. AI in marketing production is not only about working faster. It unlocks work that previously got cut from scopes because it felt too expensive or too slow to justify — custom mascots, audience-specific creative sets, animated onboarding moments, product storytelling assets. Those details are often what give a product a distinctive quality rather than a generic appearance. With AI, they are no longer reserved for large budgets and long timelines.

The choice between the three prototyping approaches is determined by the objective, not the tool. They serve three different contexts and do not compete with each other.

If the question is whether anyone wants the product — use a landing page, a waitlist, or a fake-door scenario. You are measuring demand before committing to build.

If the question is how the product logic should work — how states should behave, what hierarchy is needed, whether a specific feature belongs in this flow — use the reverse process with an AI no-code builder. You are aligning with stakeholders on architecture before detailed design work begins.

If the question is how real users interact with a specific flow in an existing product — use Figma Make. You are running user testing on real screens with real interaction fidelity.

Clarify the business question first. The right tool becomes obvious from there.
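The mapping above is simple enough to write down as a lookup, which can be handy as a shared checklist at project kickoff. This is only a sketch of the decision rule described in this article: the `Question` enum and the `choose_approach` helper are names made up for the example.

```python
from enum import Enum


class Question(Enum):
    """The three business questions a prototype can answer."""

    DEMAND = "Does anyone want the product?"
    LOGIC = "How should the product logic work?"
    BEHAVIOR = "How do real users interact with a specific flow?"


# One approach per question, as described in the text above.
APPROACH = {
    Question.DEMAND: "Landing page, waitlist, or fake-door scenario",
    Question.LOGIC: "Rough draft in an AI no-code builder",
    Question.BEHAVIOR: "Figma Make prototype on top of existing screens",
}


def choose_approach(question: Question) -> str:
    """Return the prototyping approach that matches the business question."""
    return APPROACH[question]


for question in Question:
    print(f"{question.value} -> {choose_approach(question)}")
```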

Figma MCP changes the handoff significantly, but it does not eliminate the engineering role. Its value lies in removing the guesswork from the first implementation draft: the AI reads actual Figma data instead of interpreting screenshots, and the output is considerably more accurate as a result.

What MCP does not do is make architectural decisions, manage application state, handle performance constraints, or account for edge cases. Those remain engineering responsibilities. Used correctly — iteratively, with well-prepared design files, and scoped to one component or screen at a time — MCP accelerates implementation and reduces the correction cycles between design and development.

The risk that stakeholders will mistake a rough AI-generated draft for a final design is a legitimate concern, and one to address before deciding whether to use this approach. Not every client or project context is suited to it: some teams operate comfortably with experimental models, while others need more structure and will struggle to read a rough draft as a draft.

Two practices are most effective. First, state clearly and early that the canvas is a thinking tool, not a design deliverable. Second, make the visuals deliberately unpolished by asking in your prompt for basic colors and no styling refinement. A rough-looking output communicates its own draft status far more effectively than any verbal explanation. When a draft looks like a draft, stakeholders engage with the logic. When it looks polished, they evaluate it as a finished design, and that creates exactly the misalignment you are trying to avoid.

The competitive advantage in product design in 2026 is not access to AI tools. Every team has access to the same tools. The advantage belongs to teams that understand precisely where in the process AI creates leverage — and who have rebuilt their workflows around those points rather than layering AI on top of how they already worked.

The four areas covered in this article represent the highest-value integration points we have found through direct project experience: research and audits, rapid prototyping and validation, design-to-development handoff, and marketing asset production. In each area, the return is not just speed. It is better decisions made earlier, with less financial exposure and more behavioral evidence.

At Linkup ST, a UX audit that used to require a week of senior designer time now takes a day. Alignment conversations that previously stretched across multiple revision cycles now happen in a single session. Development handoffs that generated correction cycles are now significantly closer to accurate on the first pass.

If you are working through a product challenge where any of this is relevant — whether that is an audit, a validation question, a prototyping approach, or a dev handoff problem — we are happy to discuss your specific case.